Outliers on six dimensions

Abstract

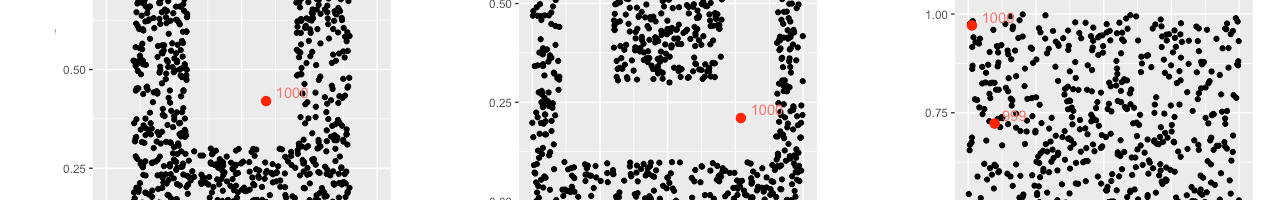

Detecting outliers in a dataset is a problem with numerous applications in data analysis, in fields such as medical care, finance, banking, and network surveillance. In most use cases, however, data points are described by many features, which makes outlier detection more complicated. As the number of dimensions increases, the notion of proximity becomes less meaningful: the data become sparser and elements become almost equally distant from each other. Medical datasets add a further layer of complexity because of their heterogeneous nature. Because of these issues, standard algorithms become less relevant when hundreds of dimensions are involved. This paper discusses the benefits of an outlier detection algorithm that uses a simple concept of one-dimensional neighborhood observations to circumvent these problems.
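The claim that elements become almost equally distant as dimensionality grows can be illustrated with a small simulation. The sketch below is not the algorithm discussed in this paper; it assumes points drawn uniformly from the unit hypercube and uses a hypothetical helper, `relative_contrast`, which measures how much farther the farthest point is from a query than the nearest one, relative to the nearest distance.

```python
import math
import random


def relative_contrast(n_points, n_dims, rng):
    """Return (d_max - d_min) / d_min for Euclidean distances from one
    query point to n_points points, all uniform in the unit hypercube.

    Illustrative helper only: the uniform data and this contrast measure
    are assumptions, not part of the paper's method.
    """
    query = [rng.random() for _ in range(n_dims)]
    distances = []
    for _ in range(n_points):
        point = [rng.random() for _ in range(n_dims)]
        distances.append(math.dist(query, point))
    d_min, d_max = min(distances), max(distances)
    return (d_max - d_min) / d_min


rng = random.Random(0)  # fixed seed so the run is repeatable
contrasts = {d: relative_contrast(500, d, rng) for d in (2, 10, 100, 1000)}
for d, c in contrasts.items():
    print(f"{d:>5} dimensions: relative contrast = {c:.3f}")
```

Under these assumptions the contrast should shrink sharply as the number of dimensions grows, meaning the nearest and farthest neighbors of a point end up at nearly the same distance, which is what weakens distance-based outlier scores.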